Irregular spatial data

Here, we develop a neural estimator for a spatial Gaussian process model with exponential covariance function and unknown range parameter

Package dependencies

using NeuralEstimators

using Flux

using GraphNeuralNetworks

using Distributions: Uniform

using CairoMakie

using Distances

using Folds # parallel simulation (start Julia with --threads=auto)

using LinearAlgebra # Cholesky factorisation

using Statistics: meanTo improve computational efficiency, various GPU backends are supported. Once the relevant package is loaded and a compatible GPU is available, it will be used automatically:

using CUDA, cuDNNusing AMDGPUusing Metalusing oneAPISampling parameters

The overall workflow follows previous examples, with two key additional considerations. First, if inference is to be made from a single spatial data set collected at fixed locations, training data can be simulated at those same locations. However, if the estimator is intended for multiple spatial data sets with varying configurations, it should be trained on a diverse set of configurations, which can be sampled using a spatial point process such as maternclusterprocess. Second, spatial data should be stored as a graph using spatialgraph.

As in the gridded example, simulation from Gaussian processes involves expensive intermediate objects (Cholesky factors). Here, we additionally store the spatial graphs needed for graph convolutions. We define a custom type Parameters subtyping AbstractParameterSet:

struct Parameters <: AbstractParameterSet

θ # parameters

L # Cholesky factors

g # spatial graphs

S # spatial locations

endWe define two constructors: one that accepts an integer and samples from the prior (used during training), and one that accepts a parameter matrix and spatial locations directly (possibly used for parametric bootstrap):

function sampler(K::Integer)

θ = 0.5 * rand(1, K)

# Sample spatial configurations from a Matérn cluster process on [0, 1]²

n = rand(200:300, K)

λ = rand(Uniform(10, 50), K)

S = [maternclusterprocess(λ = λ[k], μ = n[k]/λ[k]) for k ∈ 1:K]

Parameters(θ, S)

end

function Parameters(θ::Matrix, S)

# Covariance matrices and Cholesky factors

L = Folds.map(axes(θ, 2)) do k

D = pairwise(Euclidean(), S[k], dims = 1)

Σ = Symmetric(exp.(-D ./ θ[k]))

cholesky(Σ).L

end

# Spatial graphs

g = spatialgraph.(S)

Parameters(θ, L, g, S)

endSimulating data

function simulator(parameters::Parameters, m)

K = size(parameters, 2)

m = rand(m, K)

map(1:K) do k

L = parameters.L[k]

g = parameters.g[k]

n = size(L, 1)

Z = L * randn(n, m[k])

spatialgraph(g, Z)

end

end

simulator(parameters::Parameters, m::Integer = 1) = simulator(parameters, range(m, m))Constructing the neural network

We use a GNN architecture tailored to isotropic spatial dependence models; for further details, see Sainsbury-Dale et al. (2025, Sec. 2.2). We also employ a sparse approximation of the empirical variogram as an expert summary statistic (Gerber and Nychka, 2021).

d = 1 # dimension of the parameter vector θ

num_summaries = 3d # number of summary statistics for θ

# Spatial weight functions: continuous surrogates for 0-1 basis functions

h_max = 0.15 # maximum distance to consider

q = 10 # output dimension of the spatial weights

w = KernelWeights(h_max, q)

# Propagation module

propagation = Chain(

SpatialGraphConv(1 => q, relu, w = w, w_out = q),

SpatialGraphConv(q => q, relu, w = w, w_out = q)

)

# Readout module

readout = GlobalPool(mean)

# Inner network

ψ = GNNSummary(propagation, readout)

# Expert summary statistic: empirical variogram

S = NeighbourhoodVariogram(h_max, q)

# Outer network

ϕ = Chain(

Dense(2q, 128, relu),

Dense(128, 128, relu),

Dense(128, num_summaries)

)

# DeepSet object

network = DeepSet(ψ, ϕ; S = S)Constructing the neural estimator

We now construct a neural estimator by wrapping the neural network in the subtype corresponding to the intended inferential method:

estimator = PointEstimator(network, d; num_summaries = num_summaries)estimator = PosteriorEstimator(network, d; num_summaries = num_summaries)estimator = RatioEstimator(network, d; num_summaries = num_summaries)Training the estimator

K = 1000

θ_train = sampler(K)

θ_val = sampler(K)

estimator = train(estimator, θ_train, θ_val, simulator, epochs = 10)The empirical risk (average loss) over the training and validation sets can be plotted using plotrisk.

One may wish to save a trained estimator and load it in a later session: see Saving and loading estimators for details on how this can be done.

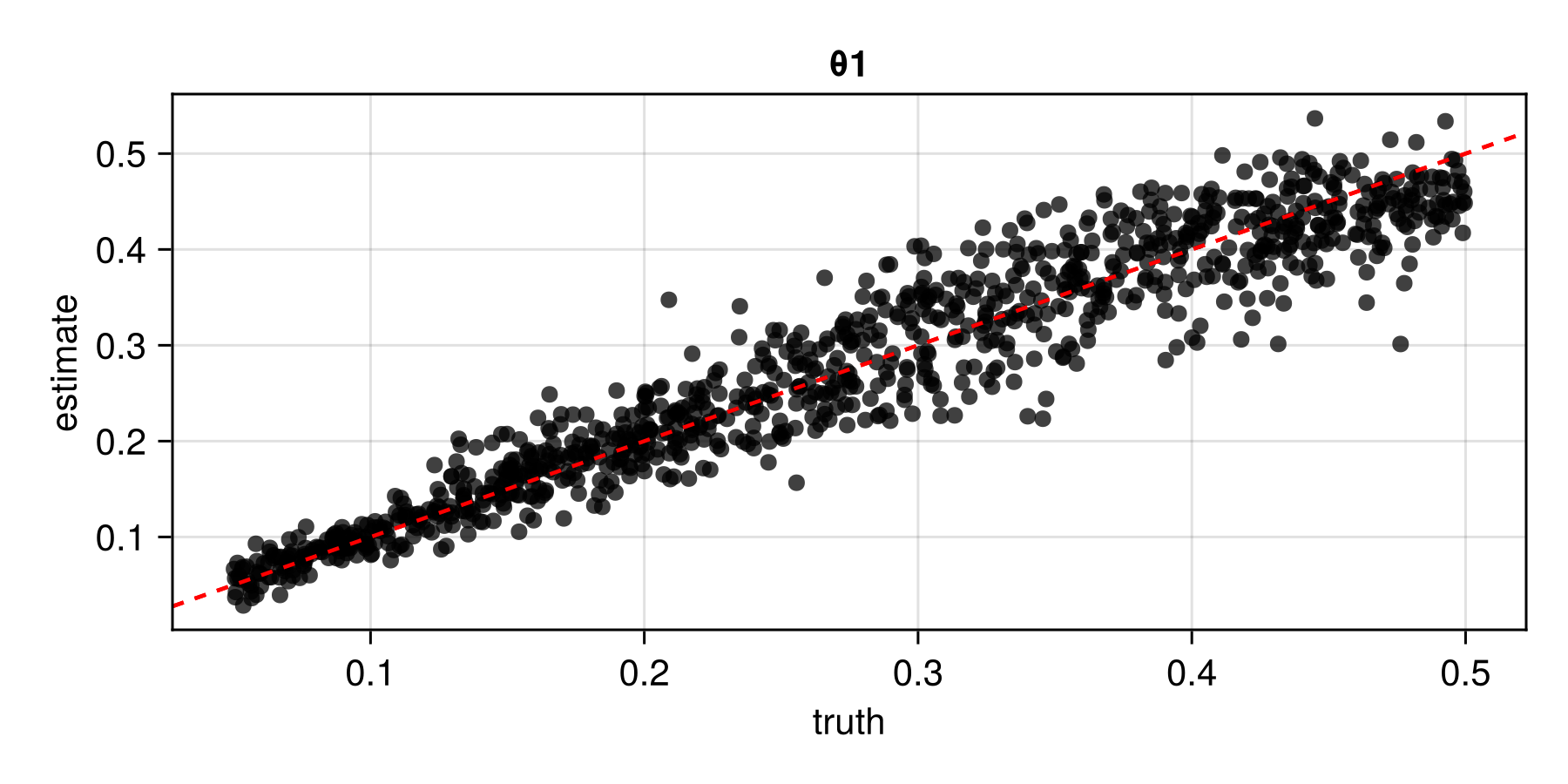

Assessing the estimator

The function assess can be used to assess the trained estimator:

θ_test = sampler(1000) # test parameters

Z_test = simulator(θ_test) # test data

assessment = assess(estimator, θ_test, Z_test)The resulting Assessment object contains ground-truth parameters, estimates, and other quantities that can be used to compute quantitative and qualitative diagnostics:

bias(assessment)

rmse(assessment)

risk(assessment)

plot(assessment)

Applying the estimator to observed data

Once an estimator is deemed to be well calibrated, it may be applied to observed data (below, we use simulated data as a stand-in for observed data). Since the estimator was trained on spatial configurations in the unit square, the spatial coordinates of observed data should be scaled to lie within this domain; estimates of any range parameters are then scaled back accordingly.

parameters = sampler(1) # ground truth (not known in practice)

θ = parameters.θ # true parameters

S = parameters.S # "observed" locations

Z = simulator(parameters) # stand-in for real dataestimate(estimator, Z) # point estimatesampleposterior(estimator, Z) # posterior samplesampleposterior(estimator, Z) # posterior sample